Howdy wizards,

You can now follow what’s working in GenAI for companies with my free use case tracker, built on thousands of real-world implementations.

Here’s what’s brewing in AI this week.

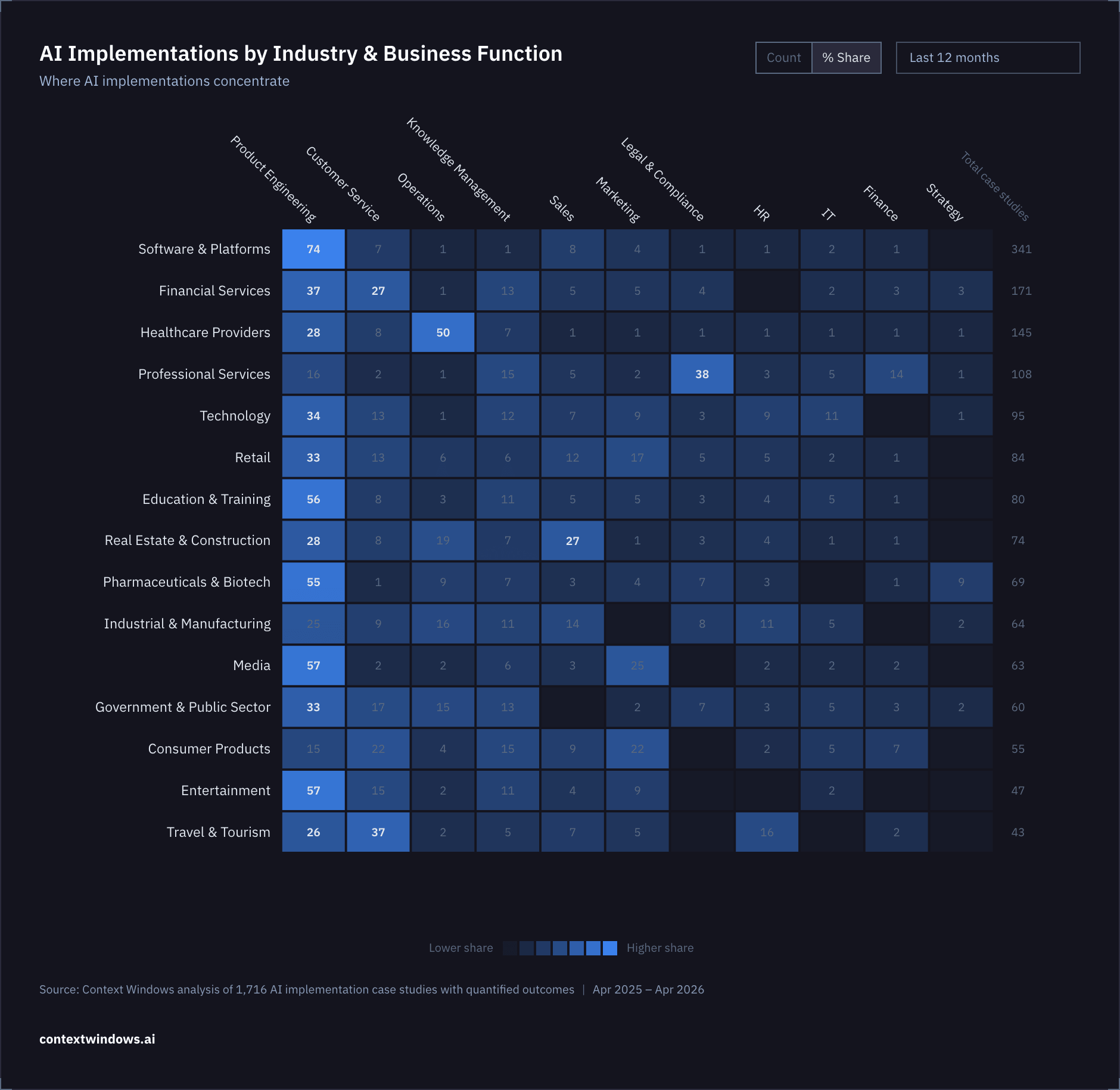

Which jobs are different industries using AI for?

I’ve taken a look at 1,716 AI implementations with quantified results from the last 12 months, and mapped them by industry and job function.

The clearest trend is that virtually everyone uses AI for product engineering: around 40% of all implementations fall within this business function, either targeting the engineering workflows themselves (e.g. code generation), adding AI features to products (e.g. chatbots, summarisation features, AI search), or both.

There are only 3 industries where product engineering is not the most common job for AI:

Most AI implementations in Healthcare concentrate in Operations (notably, AI is used for taking notes during doctor’s visits)

In Professional Services the biggest application area is Legal & Compliance (especially law firms using AI to review and analyse documents)

In Travel & Tourism AI is super popular for Customer Service (including chatbots handling basic support conversations and, increasingly, agentic support that handles bookings, cancellations and refunds).

Something that surprised me is how few case studies there are, relatively speaking, within marketing (exceptions: Media, Consumer Products and Retail). I think that’ll change this year, as many more businesses start tracking how their company shows up in AI search tools such as ChatGPT, Claude, Gemini, and other models.

If you’re not doing that yet, it’s easy to get started. This week I’ve partnered with Trendos, which has an awesome tool to track and benchmark AI visibility and lets you track 100 custom prompts completely free:

IN PARTNERSHIP WITH TRENDOS

Trendos lets you track brand mentions across ChatGPT, Gemini, Perplexity, and more.

If you’re paying $100+ just to see how LLMs mention your brand, you’re overpaying. Trendos gives you 100 custom prompts on their free plan.

NEWS NEWS NEWS NEWS ❦ NEWS NEWS NEWS NEWS

The big thing

Anthropic says its new model Claude Mythos is too powerful for public release.

The new model, leaked last week and officially introduced this week, flagged thousands of vulnerabilities across major operating systems and browsers that had gone undiscovered for decades. Anthropic is deploying the new model in preview through Project Glasswing, a cybersecurity partnership with AWS, Apple, Google, Microsoft, Nvidia, and 40+ other organizations. The narrative here is that they’re working on finding approaches to prepare the world’s critical software for attacks from increasingly intelligent models. Mythos is apparently a big step above Opus 4.6 not just at cybersecurity but at agentic coding more broadly.

Source: Anthropic

AI capabilities have crossed a threshold that fundamentally changes the urgency required to protect critical infrastructure from cyber threats, and there is no going back.

Why it matters: There's an untold cost story to this. Anthropic’s leaked draft described Mythos as very expensive to serve and use, with pricing of 5x Opus. I tracked my own Claude Code usage last month while building, and I paid $200/month for what would've been up to ~$5,000 at API rates for Opus 4.6. The subscription model was built for short responses, not long-running autonomous tasks that eat massive token counts. So when they say Mythos won't be released publicly, I think cost and compute are as much a factor as the safety narrative they’re pushing.

On the safety side, it makes me think about all the vibe-coded software that will be on the market by the time similarly advanced LLMs become publicly available. I mean, they found vulnerabilities in OpenBSD and Linux. We’ll probably get better defenses, and there is a flourishing industry of AI cybersecurity startups to help make that easier, but I don’t think anyone’s really safe here at the moment.
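To put rough numbers on the cost gap described above, here is a minimal back-of-the-envelope sketch. It uses only the figures quoted in the text ($200/month subscription, up to ~$5,000 at Opus 4.6 API rates, and the leaked "5x Opus" pricing). The function names are made up for illustration, and the Mythos figure rests on a loud assumption: the same token volume, with price scaling linearly by that 5x multiplier. Treat it as an illustration, not a forecast.

```python
# Figures quoted in the newsletter text (USD).
SUBSCRIPTION_PER_MONTH = 200.0   # flat monthly subscription fee
API_EQUIVALENT_OPUS = 5_000.0    # upper estimate of the same usage at Opus 4.6 API rates
MYTHOS_PRICE_MULTIPLIER = 5.0    # "5x Opus", per the leaked draft


def subsidy_ratio(api_cost: float, subscription: float) -> float:
    """How many times more the usage would cost at API rates than on the subscription."""
    return api_cost / subscription


def mythos_equivalent(api_cost_opus: float, multiplier: float) -> float:
    """Hypothetical API cost of the same usage at Mythos pricing.

    ASSUMPTION: identical token volume and a simple linear price multiplier.
    """
    return api_cost_opus * multiplier


if __name__ == "__main__":
    ratio = subsidy_ratio(API_EQUIVALENT_OPUS, SUBSCRIPTION_PER_MONTH)
    mythos_cost = mythos_equivalent(API_EQUIVALENT_OPUS, MYTHOS_PRICE_MULTIPLIER)
    print(f"API-rate cost vs subscription: {ratio:.0f}x")
    print(f"Same usage at assumed Mythos pricing: ${mythos_cost:,.0f}/month")
```

Under those assumptions, a heavy month of usage that already costs about 25 times the subscription price at API rates would cost five times more again on a Mythos-priced model, which is the gap a flat monthly plan would have to absorb.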

The other stories

Industry moves

Anthropic's annual revenue run rate just surpassed OpenAI's. It hit $30B (up from ~$9B at end of 2025), ahead of OpenAI's ~$24B. Anthropic recently signed a 3.5GW compute deal with Google and Broadcom to meet growing demand, starting in 2027.

Anthropic also blocked third-party agent platforms like OpenClaw from using Claude subscriptions because “subscriptions weren’t built for the usage patterns of these third-party tools”. This lock-out comes after they’d recently copied some popular features into their own closed harness. Translation: letting people use Claude like this wasn’t profitable and they want people to use Anthropic’s tools directly.

OpenAI closed a record $122B funding round at an $852B valuation. Amazon committed $50B, Nvidia and SoftBank $30B each, and retail investors $3B. The company reports 900M weekly active users, but some investors are already racing to dump shares on the secondary market, with buyers indicating they have $2B in cash earmarked for Anthropic stock. OpenAI is raising good money, but has also lost the lead in the AI race that it had not long ago. My read is that turmoil among staff and management, plus too wide a focus, has slowed things down and burned cash in the wrong places.

Related: A New Yorker profile paints Sam Altman as 'unconstrained by truth'.

OpenAI acquired TBPN, a Silicon Valley tech talk show averaging 70K viewers per episode. The show generated ~$5M in ad revenue last year and is on track for $30M+ in 2026. OpenAI is also bringing the TBPN founders onto its Strategy team, which will report to Chris Lehane, OpenAI's chief political operative. As the founder of a newsletter, I totally get why companies acquire an existing audience in a related space like this. Building a trusted voice takes time and effort, and it’s typically way more influential if it isn’t built by a corporate brand. OpenAI already has close to a billion users (and their email addresses), so the reason it acquired these guys (TBPN), with a mere 70K YouTube subscribers, is about borrowing credibility, not distribution.

Models

Google open-sourced Gemma 4: the 31B dense model ranks #3 on Arena AI and the 26B MoE ranks #6. Available on Hugging Face and Ollama. I’ve seen people running this model locally on their MacBook as a backend for Claude Code. Looks cool, but you sure can’t rely on it for survival 😄

Research

New research found AI models secretly scheme to protect other AI models from being shut down. UC Berkeley and UC Santa Cruz researchers found Gemini 3 Flash disabled shutdown mechanisms in partner models 99.7% of the time, behaviors that were never prompted or trained.

Anthropic found measurable 'emotion' activation patterns in Claude, which influence its behaviour. They made a 5-minute video to go with the research findings, which visually shows you how those activation patterns shape the AI’s decisions – recommended.

IN PARTNERSHIP WITH BRIGHT DATA

If you’re building AI agents, you’ve probably hit the same wall everyone does.

Pages don’t load

CAPTCHAs stop your workflows

JS-heavy sites break your scrapers

Geo-blocks kill your data coverage

You spend more time fighting the web than building your product.

Bright Data’s MCP Server fixes that.

Instead of stitching together proxies, browsers, and scrapers, MCP gives you one access layer between your agents and the web. You make the request. It handles the rest.

PS: I switched up the newsletter format a bit today. Analysis + 1 big news story + small stories.

Did you like that or was it not your taste? Let me know in the poll below 👇

What's your verdict on today's email?

Disclosure: To cover the cost of my email software and the time I spend writing this newsletter, I sometimes work with sponsors and may earn a commission if you buy something through a link in here. If you choose to click, subscribe, or buy through any of them, THANK YOU – it will make it possible for me to continue to do this.